The “EnergAIzer” approach produces reliable results in seconds, allowing data center operators to allocate resources efficiently and reduce wasted energy.

Due to the explosive growth of artificial intelligence, data centers are estimated to consume up to 12% of total U.S. electricity by 2028, according to the Lawrence Berkeley National Laboratory. Improving data center energy efficiency is one way scientists are working to make AI more sustainable.

Toward that goal, researchers from MIT and the MIT-IBM Watson AI Lab developed a rapid prediction tool that tells data center operators how much power would be consumed by running a given AI workload on a certain processor or AI accelerator chip.

Their approach produces reliable power estimates in a matter of seconds, unlike traditional modeling techniques that can take hours or even days to yield results. Furthermore, their prediction tool can be applied to a wide range of hardware configurations, even emerging designs that haven’t been deployed yet.

Data center operators could use these estimates to efficiently allocate limited resources across multiple AI models and processors, improving energy efficiency. In addition, this tool could allow algorithm developers and model providers to assess the potential energy consumption of a new model before they deploy it.

“The AI sustainability challenge is a pressing question we have to answer. Because our estimation technique is rapid, convenient, and provides direct feedback, we hope it makes algorithm developers and data center operators much more likely to consider reducing energy consumption,” says Kyungmi Lee, an MIT postdoc and lead author of a paper on this method.

She is joined on the paper by Zhiye Song, an electrical engineering and computer science (EECS) graduate student; Eun Kyung Lee and Xin Zhang, research managers at IBM Research and the MIT-IBM Watson AI Lab; Tamar Eilam, IBM Fellow, chief scientist of sustainable computing at IBM Research, and a member of the MIT-IBM Watson AI Lab; and senior author Anantha P. Chandrakasan, MIT provost, Vannevar Bush Professor of Electrical Engineering and Computer Science, and a member of the MIT-IBM Watson AI Lab. The research is being presented this week at the IEEE International Symposium on Performance Analysis of Systems and Software.

Expediting energy estimation

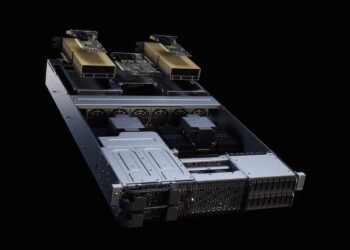

Inside a data center, large numbers of powerful graphics processing units (GPUs) perform operations to train and deploy AI models. The power consumption of a particular GPU will vary based on its configuration and the workload it’s handling.

Many traditional techniques used to predict energy consumption involve breaking a workload into individual steps and tracking how every module inside the GPU is being used, one step at a time. But AI workloads like model training and data preprocessing are extremely large and can take hours or even days to simulate in this way.

“As an operator, if I want to compare different algorithms or configurations to find the most energy-efficient way to proceed, and a single simulation is going to take days, this is going to become very impractical,” Lee says.

To accelerate the prediction process, the MIT researchers sought to use less-detailed information that could be estimated more quickly. They found that AI workloads often have many repeatable patterns. They could use these patterns to generate the information needed for reliable but fast power estimation.

In many cases, algorithm developers write programs to run as efficiently as possible on a GPU. For example, they use well-structured optimizations to distribute the work across parallel processing cores and move chunks of data around in the most efficient manner.

“These optimizations that software developers use create a regular structure, and that’s what we’re trying to leverage,” Lee explains.

The researchers developed a lightweight estimation model, called EnergAIzer, that captures the power usage pattern of a GPU from those optimizations.

An accurate assessment

But while their estimation was fast, the researchers found that it didn’t take all energy costs into account. For example, each time a GPU runs a program, there is a fixed energy cost for setting up and configuring that program. Then, every time the GPU runs an operation on a chunk of data, an additional energy cost must be paid.

Due to fluctuations in the hardware or conflicts in accessing or transferring data, a GPU may not be able to use all available bandwidth, which slows operations down and draws more energy over time.

To include these additional costs and variances, the researchers collected real measurements from GPUs to form correction terms that they applied to their estimation model.

“This way, we can get a fast estimation that is also very accurate,” she says.
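
The idea of a fixed setup cost, a per-chunk cost, and measurement-based correction terms can be illustrated with a small Python sketch. This is not EnergAIzer's actual model, which the article does not describe in detail. The function names, the linear form of the correction, and all numbers below are assumptions made purely for illustration.

```python
def analytical_energy(num_chunks, setup_joules, per_chunk_joules):
    """Fixed setup cost plus one cost for each chunk of data processed."""
    return setup_joules + num_chunks * per_chunk_joules


def fit_correction(predicted, measured):
    """Ordinary least-squares fit of: measured ~ scale * predicted + offset.

    The linear correction is an assumed form, chosen for simplicity.
    """
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_m = sum(measured) / n
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    scale = cov / var_p
    offset = mean_m - scale * mean_p
    return scale, offset


def corrected_energy(num_chunks, setup_joules, per_chunk_joules, scale, offset):
    """Apply the fitted correction to the raw analytical estimate."""
    raw = analytical_energy(num_chunks, setup_joules, per_chunk_joules)
    return scale * raw + offset


# Hypothetical values, not real GPU measurements.
chunk_counts = [100, 200, 400, 800]
raw_estimates = [analytical_energy(c, 2.0, 0.05) for c in chunk_counts]
measured_joules = [8.1, 13.9, 26.2, 49.8]

scale, offset = fit_correction(raw_estimates, measured_joules)
print(f"scale={scale:.3f}, offset={offset:.3f}")
print(f"corrected estimate for 1,600 chunks: "
      f"{corrected_energy(1600, 2.0, 0.05, scale, offset):.1f} J")
```

In this sketch, the raw estimate is cheap to compute because it depends only on the chunk count, and the fitted terms absorb effects the simple formula misses, such as bandwidth that is not fully used.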

In the end, a user can provide their workload information, like the AI model they want to run and the number and length of user inputs to process, and EnergAIzer will output an energy consumption estimate in a matter of seconds.

The user can also change the GPU configuration or adjust the operating speed to see how such design choices impact the overall power consumption.

When the researchers tested EnergAIzer using real AI workload information from actual GPUs, it could estimate the power consumption with only about 8% error, which is comparable to traditional techniques that can take hours to produce results.
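
For readers who want to see how an error figure of this kind can be computed, here is a minimal Python sketch. The article does not say which error metric the researchers used, so the mean absolute percentage error below is an assumption, and the sample values are invented for illustration.

```python
def mean_abs_pct_error(estimated, measured):
    """Average of |estimate - measurement| / measurement, as a percentage."""
    if len(estimated) != len(measured) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(e - m) / m for e, m in zip(estimated, measured))
    return 100.0 * total / len(measured)


# Hypothetical power values in watts, not data from the paper.
estimated_watts = [216.0, 276.0, 324.0]
measured_watts = [200.0, 300.0, 300.0]
print(f"{mean_abs_pct_error(estimated_watts, measured_watts):.1f}% error")
```

Each estimate in the example is off by 8% of its measured value, some too high and one too low, which is why the absolute value is taken before averaging.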

Their technique could also be used to predict the power consumption of future GPUs and emerging device configurations, as long as the hardware doesn’t change drastically in a short amount of time.

In the future, the researchers want to test EnergAIzer on the newest GPU configurations and scale the model up so it can be applied to many GPUs that are working together to run a workload.

“To really make an impact on sustainability, we need a tool that can offer a quick energy estimation solution across the stack, for hardware designers, data center operators, and algorithm developers, so we can all be more aware of power consumption. With this tool, we’ve taken one step toward that goal,” Lee says.